Choosing the right big data processing framework can pose significant challenges for organizations looking to make sense of their vast data reservoirs. As businesses increasingly rely on data-driven decisions, how can you determine which framework will provide the best efficiency and performance? Enter Apache Spark and Hadoop, two of the most popular frameworks available today, each with unique strengths and weaknesses. Understanding the differences between them is crucial when formulating your big data strategy, as it can mean the difference between success and setbacks in your analytics endeavors.

Overview of Big Data Processing Frameworks

Definition and Importance of Big Data

Big data refers to the ever-expanding volumes of structured and unstructured information generated every moment, from sources such as social media, sensors, and transaction records. Its significance lies in its potential to unlock valuable business insights, improve efficiency, and drive strategic decision-making. As datasets keep growing, organizations need effective frameworks that can manage and analyze them and extract meaningful information.

Today’s landscape demands frameworks capable of handling varied data types and sources while ensuring that data can be processed and analyzed quickly. Without the right tools, organizations risk being buried under an avalanche of information they cannot access or leverage effectively. Therefore, selecting the right big data processing framework is a daunting yet critical step for any business seeking to harness its data’s full potential.

Key Characteristics of Big Data Frameworks

When evaluating big data processing frameworks, several essential characteristics come into play:

- Scalability: As data volumes grow, the chosen framework must efficiently scale, accommodating the increasing load without sacrificing performance.

- Speed: Real-time processing capabilities allow organizations to derive insights from data as it is generated, facilitating timely decision-making.

- Fault Tolerance: Reliable frameworks must manage faults effectively, ensuring that data processing continues seamlessly even in the face of system failures.

- Ease of Use: Intuitive interfaces and straightforward deployment empower teams, regardless of their technical backgrounds, to utilize the framework efficiently.

- Support and Community: A strong user community can enhance a framework’s reliability through shared experiences, troubleshooting assistance, and ongoing resource development.

Understanding these characteristics can help organizations identify the right framework tailored to their specific big data processing needs.

Understanding Apache Spark

Architecture of Apache Spark

Apache Spark is designed for speed and ease of use, with an architecture centered on in-memory processing. Its core abstraction, the Resilient Distributed Dataset (RDD), is an immutable collection of records partitioned across the machines of a cluster. Transformations on an RDD are evaluated lazily and recorded as a lineage. This lets Spark keep intermediate results in memory between processing steps instead of writing them to disk, and rebuild a lost partition by replaying the steps that produced it.
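
To make lazy transformations and lineage concrete, here is a toy, single-process model in Python. The `MiniRDD` class and its methods are hypothetical and are not Spark's API; the sketch only assumes that data is split into partitions and that each derived dataset remembers the steps that produced it.

```python
from typing import Any, Callable, List, Optional, Tuple

# One lineage step: ("map" or "filter", function)
Step = Tuple[str, Callable[[Any], Any]]


class MiniRDD:
    """Hypothetical, single-process stand-in for an RDD (not Spark's API).

    It holds the source partitions plus a lineage: the ordered list of
    transformations to apply. Nothing is computed until an action runs.
    """

    def __init__(self, partitions: List[List[Any]], lineage: Optional[List[Step]] = None):
        self.partitions = partitions      # input data, already split into partitions
        self.lineage = lineage or []      # recorded transformations, oldest first

    def map(self, fn: Callable[[Any], Any]) -> "MiniRDD":
        # Lazy: return a new dataset whose lineage has one more step.
        return MiniRDD(self.partitions, self.lineage + [("map", fn)])

    def filter(self, pred: Callable[[Any], bool]) -> "MiniRDD":
        return MiniRDD(self.partitions, self.lineage + [("filter", pred)])

    def compute_partition(self, index: int) -> List[Any]:
        # Replay the lineage over one source partition. The same replay is
        # how a partition lost to a failure could be rebuilt.
        rows = list(self.partitions[index])
        for kind, fn in self.lineage:
            rows = [fn(r) for r in rows] if kind == "map" else [r for r in rows if fn(r)]
        return rows

    def collect(self) -> List[Any]:
        # Action: evaluate every partition and concatenate the results.
        return [row for i in range(len(self.partitions)) for row in self.compute_partition(i)]


if __name__ == "__main__":
    data = MiniRDD([[1, 2, 3], [4, 5, 6]])                 # two partitions
    evens_squared = data.filter(lambda x: x % 2 == 0).map(lambda x: x * x)
    print(evens_squared.collect())                         # [4, 16, 36]
    print(evens_squared.compute_partition(1))              # [16, 36], rebuilt from lineage alone
```

Because `map` and `filter` only append to the lineage, no work happens until `collect()` is called, and a single partition can be recomputed without touching the others. Real Spark adds distributed scheduling, shuffles, and optional in-memory caching on top of this idea.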

Moreover, Spark’s ability to process data in memory substantially speeds up computations, particularly iterative algorithms in machine learning and data analysis that pass over the same data many times. This gives Spark a distinct advantage over disk-oriented frameworks such as Hadoop MapReduce, which writes intermediate data to disk between processing steps.
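
The benefit for iterative algorithms can be illustrated with a toy experiment. `ToyStorage` is a hypothetical stand-in for disk or HDFS that simply counts full reads, and the algorithm is plain gradient descent for the mean of a list of numbers, chosen only because it is short. With `cache=True` the data is loaded once and reused, in the spirit of Spark's caching; with `cache=False` it is re-read on every iteration, the way a chain of MapReduce jobs would read its input from storage each time.

```python
from typing import Iterable, List, Optional


class ToyStorage:
    """Hypothetical stand-in for disk/HDFS that counts how often data is read."""

    def __init__(self, values: Iterable[float]):
        self._values = list(values)
        self.reads = 0

    def load(self) -> List[float]:
        self.reads += 1               # each call models a full scan from storage
        return list(self._values)


def fit_mean(storage: ToyStorage, iterations: int = 50, lr: float = 0.1, cache: bool = True) -> float:
    """Gradient descent on mean((x - w)^2); the minimizer is the mean of the data.

    cache=True: load once and reuse the in-memory copy every iteration.
    cache=False: reload the data from storage on every iteration.
    """
    data: Optional[List[float]] = storage.load() if cache else None
    w = 0.0
    for _ in range(iterations):
        rows = data if cache else storage.load()
        grad = sum(-2.0 * (x - w) for x in rows) / len(rows)   # d/dw of mean((x - w)^2)
        w -= lr * grad
    return w


if __name__ == "__main__":
    cached, uncached = ToyStorage([2.0, 4.0, 6.0]), ToyStorage([2.0, 4.0, 6.0])
    print(round(fit_mean(cached, cache=True), 3), cached.reads)        # 4.0 1
    print(round(fit_mean(uncached, cache=False), 3), uncached.reads)   # 4.0 50
```

Both runs compute the same answer; the only difference is that the uncached version performs one full read per iteration. On a real cluster each of those reads is disk and network I/O, which is why caching pays off most for algorithms that revisit the same data repeatedly.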

Use Cases for Apache Spark

Apache Spark shines in numerous real-world applications, particularly where speed and data processing capabilities are essential. For example:

- Machine Learning: Organizations like Netflix use Spark and its MLlib library to build recommendation models from data on user behavior and preferences.

- Real-Time Data Processing: Companies such as Uber use Spark Streaming to process event data in near real time, supporting tasks such as route planning and ride-cost estimation.

- Data Analytics: An example includes a financial services firm using Spark for fraud detection analytics, rapidly processing large volumes of transactional data to identify and block suspicious activities.

These scenarios demonstrate Spark’s immense versatility and performance efficiency, making it a preferred choice for businesses aiming to derive insights quickly from large volumes of data.
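
Spark Streaming, mentioned in the Uber example, treats a live stream as a sequence of small batches (micro-batches). The toy function below shows only the batching step: it assigns hypothetical `(timestamp, zone)` ride-request events to fixed-length intervals and counts requests per zone in each interval. It runs in a single process and is not Spark's API; the event format and the `batch_interval` parameter are assumptions for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical event format: (timestamp in seconds, zone identifier)
Event = Tuple[float, str]


def micro_batch_counts(events: Iterable[Event], batch_interval: float) -> Dict[int, Counter]:
    """Cut a stream into fixed-length batches and count events per zone in each.

    Batch k covers timestamps in [k * batch_interval, (k + 1) * batch_interval).
    """
    batches: Dict[int, Counter] = defaultdict(Counter)
    for ts, zone in events:
        batch_id = int(ts // batch_interval)   # interval the event falls into
        batches[batch_id][zone] += 1           # per-batch aggregation
    return dict(batches)


if __name__ == "__main__":
    stream = [(0.5, "A"), (1.2, "A"), (1.9, "B"), (2.1, "A")]
    for batch_id, counts in sorted(micro_batch_counts(stream, 2.0).items()):
        print(batch_id, dict(counts))
    # 0 {'A': 2, 'B': 1}
    # 1 {'A': 1}
```

Each batch is then processed like a tiny batch job, which is why this model delivers results within seconds rather than instantly; the batch interval trades latency against per-batch overhead.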

Understanding Hadoop

Architecture of Hadoop

At its core, Hadoop is a framework for storing and processing vast amounts of data on a cluster of machines. The Hadoop Distributed File System (HDFS) splits large files into blocks and stores copies of each block on several machines (three by default), so data remains accessible and safe even if a machine fails.
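
The block-and-replica idea can be sketched in Python. The constants mirror HDFS's documented defaults (`dfs.blocksize` of 128 MB and `dfs.replication` of 3), but the functions are hypothetical helpers, and the round-robin placement is a deliberate simplification: real HDFS uses a rack-aware placement policy.

```python
from typing import Dict, List

BLOCK_SIZE = 128 * 1024 * 1024   # default dfs.blocksize: 128 MB
REPLICATION = 3                  # default dfs.replication


def split_into_blocks(file_size: int, block_size: int = BLOCK_SIZE) -> List[int]:
    """Sizes of the blocks a file of file_size bytes is split into.

    The last block holds only the remaining bytes; it is not padded.
    """
    full, rest = divmod(file_size, block_size)
    return [block_size] * full + ([rest] if rest else [])


def place_replicas(num_blocks: int, nodes: List[str],
                   replication: int = REPLICATION) -> Dict[int, List[str]]:
    """Assign each block to `replication` distinct nodes (round-robin toy policy)."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    return {
        b: [nodes[(b + r) % len(nodes)] for r in range(replication)]
        for b in range(num_blocks)
    }


if __name__ == "__main__":
    MB = 1024 * 1024
    blocks = split_into_blocks(300 * MB)
    print([size // MB for size in blocks])                 # [128, 128, 44]
    placement = place_replicas(len(blocks), ["dn1", "dn2", "dn3", "dn4"])
    # If dn2 fails, every block still has at least two live replicas.
    print(all(len([n for n in nodes if n != "dn2"]) >= 2 for nodes in placement.values()))  # True
```

With these defaults, a 1 GB file becomes 8 blocks and occupies about 3 GB of raw disk space across the cluster; that threefold storage cost is the price of surviving machine failures.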

Moreover, Hadoop’s YARN (Yet Another Resource Negotiator) resource management layer schedules and allocates cluster resources, such as memory and CPU, to running applications, aiming to maximize utilization and efficiency. Combined with the MapReduce processing engine, this architecture is geared primarily toward batch processing: the analysis of massive datasets accumulated over time.
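
A simplified view of how YARN packs work onto a machine: each NodeManager offers a fixed amount of memory and virtual cores, and each container request is rounded up before being placed. In the sketch below, `node_memory_mb` plays the role of `yarn.nodemanager.resource.memory-mb` and `min_alloc_mb` that of `yarn.scheduler.minimum-allocation-mb` (default 1024 MB). The function names are hypothetical, the round-up rule is a simplification of how schedulers normalize requests, and checking vcores as well as memory reflects the dominant-resource calculator rather than the Capacity Scheduler's memory-only default.

```python
import math


def normalize_request(request_mb: int, min_alloc_mb: int) -> int:
    """Round a memory request up to a whole multiple of the minimum allocation."""
    return max(min_alloc_mb, math.ceil(request_mb / min_alloc_mb) * min_alloc_mb)


def containers_per_node(node_memory_mb: int, node_vcores: int,
                        request_mb: int, request_vcores: int,
                        min_alloc_mb: int = 1024) -> int:
    """How many identical containers fit on one NodeManager (toy model)."""
    container_mb = normalize_request(request_mb, min_alloc_mb)
    by_memory = node_memory_mb // container_mb
    by_cores = node_vcores // request_vcores
    return min(by_memory, by_cores)   # whichever resource runs out first


if __name__ == "__main__":
    # Hypothetical node: 16 GB and 8 vcores offered to YARN.
    print(containers_per_node(16384, 8, request_mb=1500, request_vcores=1))  # 8
    print(containers_per_node(16384, 8, request_mb=3000, request_vcores=1))  # 5
```

The second call shows why request sizing matters: a 3000 MB request is rounded up to 3072 MB, so only five containers fit and about 1 GB of the node's memory sits idle.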

Use Cases for Hadoop

Hadoop is particularly effective in scenarios necessitating extensive storage and batch processing capabilities:

- Data Archiving: Businesses that require long-term storage of vast datasets, such as in finance and telecommunications, use Hadoop as a cost-efficient solution to manage and archive data.

- Data Lake Implementations: Industries like healthcare use Hadoop to store and analyze very large volumes of patient data, deriving insights that support better patient outcomes.

- Log Processing: Companies often use Hadoop for processing logs, providing insights on system performance, operational issues, or user interactions that help improve services and enhance user experience.

These examples emphasize Hadoop’s reliability and robustness in managing large-scale data, especially where extensive batch processing is necessary.
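
The batch model behind jobs like log processing is MapReduce: a map step emits key/value pairs, the framework groups (shuffles) them by key, and a reduce step aggregates each group. The single-process sketch below counts log lines per severity level; the log format `<date> <time> <LEVEL> <message>` and all function names are assumptions for illustration, not Hadoop's Java API.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    # Emit (log_level, 1) per line; lines not matching the assumed format are skipped.
    parts = line.split()
    if len(parts) >= 3:
        yield parts[2], 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    # Group all values by key, as the framework does between map and reduce.
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    # Aggregate one key's values into a single result.
    return key, sum(values)


def run_job(lines: Iterable[str]) -> Dict[str, int]:
    mapped = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(k, v) for k, v in sorted(grouped.items()))


if __name__ == "__main__":
    logs = [
        "2023-01-01 10:00:00 INFO service started",
        "2023-01-01 10:00:01 ERROR disk full",
        "2023-01-01 10:00:02 INFO retrying",
    ]
    print(run_job(logs))   # {'ERROR': 1, 'INFO': 2}
```

In Hadoop, each phase runs in parallel across the cluster: map output is written to local disk, and reduce output is written to HDFS. That disk I/O makes long batch jobs robust, and it is also the overhead Spark's in-memory model tries to avoid.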

Apache Spark vs Hadoop: Key Comparisons

Performance Metrics

When it comes to performance, Apache Spark and Hadoop MapReduce differ significantly. The Spark project has cited speedups of up to 100 times over MapReduce for in-memory workloads and around 10 times for disk-based ones, though real-world gains depend heavily on the workload.

In some reported cases, Spark has cut jobs that take several hours on Hadoop MapReduce down to minutes, particularly iterative and interactive workloads that reuse the same data. Single-pass jobs dominated by reading input from disk usually see smaller gains, so the advantage depends on how much of the work can stay in memory.

A widely cited example is the 2014 Daytona GraySort benchmark, in which Spark sorted 100 TB of data on disk in about 23 minutes using 206 machines, compared with 72 minutes on 2,100 machines for the previous Hadoop MapReduce record. Results like this make Spark a compelling choice for organizations that prioritize performance in their data processing framework.
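
Why the speedup depends on the workload can be seen from a back-of-the-envelope cost model. The sketch assumes, purely for illustration, that every MapReduce iteration is a separate job that reads its input from HDFS and writes its output back, while Spark reads the input once, keeps it in memory, and writes only the final result. It ignores shuffles, memory pressure, and cluster overheads, and the timings below are made-up placeholders, not measurements.

```python
def mapreduce_seconds(iterations: int, read_s: float, compute_s: float, write_s: float) -> float:
    # Every iteration is its own job: read from HDFS, compute, write back to HDFS.
    return iterations * (read_s + compute_s + write_s)


def spark_cached_seconds(iterations: int, read_s: float, compute_s: float, write_s: float) -> float:
    # Read once, iterate on the in-memory copy, write the final result once.
    return read_s + iterations * compute_s + write_s


if __name__ == "__main__":
    # Hypothetical timings: 60 s to read, 5 s of compute per pass, 60 s to write.
    for k in (1, 10):
        mr = mapreduce_seconds(k, 60, 5, 60)
        sp = spark_cached_seconds(k, 60, 5, 60)
        print(k, mr, sp, round(mr / sp, 1))
    # 1 125 125 1.0
    # 10 1250 170 7.4
```

For a single pass the model predicts no difference, while for ten iterations it predicts roughly a sevenfold gap that keeps widening as iterations increase. That pattern is consistent with the observation that iterative workloads benefit most from Spark.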

Framework Usability and Learning Curve

When evaluating usability and the learning curve for both frameworks, Apache Spark tends to be more user-friendly. It offers high-level APIs in Scala, Java, Python, and R, as well as SQL through Spark SQL, so developers can work in a language they already know. Additionally, Spark’s comprehensive documentation and supportive community enhance usability.

Hadoop, while robust and established, often presents a steep learning curve due to its complexity. Understanding how HDFS, YARN, and MapReduce work together can be a challenge for newcomers, requiring more time to master the ins and outs of the architecture.

User feedback frequently highlights Spark’s simplicity and accessibility, which helps organizations adopt big data solutions and deploy them quickly.

Advantages of Apache Spark

Speed and Performance Benefits

One of the standout benefits of Apache Spark is its speed. Because Spark keeps working data in memory, organizations can run complex data transformations and analyses with far lower latency than disk-based batch frameworks such as Hadoop MapReduce, in some cases in near real time. The result is improved operational efficiency and faster insights from data, providing competitive advantages in responsiveness to market changes, customer behaviors, and operational needs.

Additionally, this speed permits more iterative data analysis, an essential aspect of machine learning algorithms that benefit significantly from rapid testing and refinement. The impact of using a faster framework can be transformative for businesses with high data processing demands, allowing them to keep ahead in fast-paced environments.

Ecosystem and Integration

Apache Spark’s compatibility with various data sources and other frameworks adds another layer of flexibility for organizations. Spark can read from and write to Hadoop’s HDFS as well as data lakes, NoSQL databases such as MongoDB (through a connector), and traditional relational databases (through its JDBC data source).

Moreover, its extensive libraries for graph processing (GraphX), machine learning (MLlib), and stream processing (Spark Streaming) ensure that organizations can leverage a versatile toolkit that caters to diverse data processing needs. This adaptability makes Spark an attractive option for organizations looking to invest in a comprehensive big data strategy supported by numerous integrations and advanced features.

Advantages of Hadoop

Cost-Effective Storage Solutions

One of the fundamental advantages of Hadoop lies in its cost-effective approach to data storage. Utilizing commodity hardware for its clusters allows organizations to store immense amounts of data without incurring exorbitant costs.

As businesses generate and accumulate massive datasets, Hadoop facilitates long-term storage at scale, making it particularly appealing for organizations in sectors such as retail and healthcare, where data is continuously generated. This economic efficiency often translates to lower total costs of ownership, which is a significant consideration for businesses managing extensive data infrastructure budgets.

Proven Reliability in Processing

Hadoop has established a strong reputation for reliability in processing large-scale data. Its batch processing model is built to finish long-running jobs even when individual machines fail: a failed task is simply re-run, often on another node. This makes Hadoop a go-to solution for many businesses that prioritize data integrity and processing endurance.

Large companies like Yahoo! and Facebook have used Hadoop not just for its storage and processing capabilities, but also for its fault tolerance: HDFS keeps multiple copies of every block, so the failure of a single machine neither loses data nor forces a whole job to start over. Hadoop’s long track record of handling large datasets over extended periods adds to its appeal, especially among businesses that require a dependable data framework.
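
Task re-execution, one of the mechanisms behind this fault tolerance, can be sketched in a few lines. This is a toy, single-process model: tasks are plain Python functions that receive their attempt number (a hypothetical convention that makes failures reproducible), and `MAX_ATTEMPTS = 4` mirrors the default of Hadoop's `mapreduce.map.maxattempts` setting. Real Hadoop runs each retry in a new container, possibly on a different node.

```python
from typing import Callable, Dict

MAX_ATTEMPTS = 4  # mirrors the default value of mapreduce.map.maxattempts


def run_with_retries(tasks: Dict[str, Callable[[int], int]],
                     max_attempts: int = MAX_ATTEMPTS) -> Dict[str, int]:
    """Run each task, re-executing it after a failure, up to max_attempts times."""
    results: Dict[str, int] = {}
    for name, task in tasks.items():
        for attempt in range(1, max_attempts + 1):
            try:
                results[name] = task(attempt)
                break  # success: move on to the next task
            except RuntimeError:
                if attempt == max_attempts:
                    raise  # the job fails once a task exhausts its attempts
    return results


def flaky_task(attempt: int) -> int:
    # Hypothetical task that fails on its first two attempts (e.g. a lost node).
    if attempt < 3:
        raise RuntimeError(f"attempt {attempt} failed")
    return 42


if __name__ == "__main__":
    print(run_with_retries({"stable": lambda attempt: 1, "flaky": flaky_task}))
    # {'stable': 1, 'flaky': 42}
```

Only the failed task is retried; results already produced by other tasks are kept, which is what lets long batch jobs survive occasional machine failures.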

Conclusion

In summary, choosing between Apache Spark and Hadoop hinges on understanding their distinct advantages and use cases. Apache Spark excels where speed and real-time or near-real-time insights matter, making it well suited to applications that demand fast data processing, while Hadoop shines in large-scale storage and dependable batch processing.

As you navigate your big data strategy, evaluate your organization’s needs to make an informed decision. For businesses ready to dive into big data, leveraging a trusted solution such as Wildnet Edge can accelerate implementation and ensure you harness the full potential of your data landscape.

FAQs

Q1: What are the main differences between Spark and Hadoop?

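Spark processes data in memory and supports both batch and near-real-time stream processing, while Hadoop MapReduce writes intermediate results to disk and is better suited to batch processing.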

Q2: Which framework is better for big data analytics?

Apache Spark is generally preferred for its speed and in-memory capabilities, especially in analytics tasks.

Q3: Can Spark work with Hadoop’s ecosystem?

Yes, Spark can integrate with Hadoop and utilize HDFS for data storage.

Q4: Is Hadoop still relevant for big data processing in 2023?

Yes, Hadoop remains a robust solution for large-scale data storage and batch processing.

Q5: How do I choose between Spark and Hadoop for my project?

Assess your processing needs, data size, and budget to make an informed choice between them.

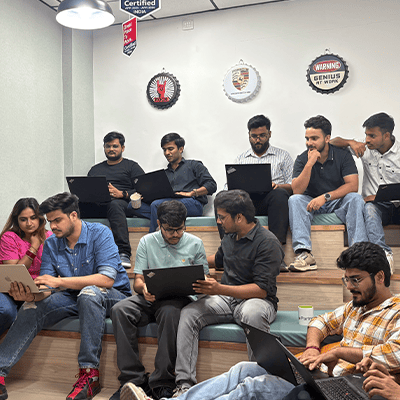

Managing Director (MD) Nitin Agarwal is a veteran in custom software development. He is fascinated by how software can turn ideas into real-world solutions. With extensive experience designing scalable and efficient systems, he focuses on creating software that delivers tangible results. Nitin enjoys exploring emerging technologies, taking on challenging projects, and mentoring teams to bring ideas to life. He believes that good software is not just about code; it’s about understanding problems and creating value for users. For him, great software combines thoughtful design, clever engineering, and a clear understanding of the problems it’s meant to solve.

sales@wildnetedge.com

+1 (212) 901 8616

+1 (437) 225-7733
